It's valid HTML5 according to the W3C HTML validator. Let's use jsoup to fetch that doc and see what the title of the page is.

I've used a preset called basicWithImages. There are a few others built in, or you can create your own custom one by extending this class or modifying an existing instance. The onclick attribute has been removed from the tag, which prevents the XSS. jsoup has also added rel="nofollow", which tells search engines not to consider this link when calculating the target page's importance. This prevents comment-spamming to drive SEO for the target page. Try doing that with regex! (No, please don't!)
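As a rough sketch of the cleaning step described above, the snippet below runs an untrusted fragment through the basicWithImages preset. It assumes a recent jsoup release, where the presets live on the Safelist class (older releases call the same class Whitelist). The class name CommentCleaner, the sample comment and the example.com address are made up for illustration and are not taken from the original post.

```java
import org.jsoup.Jsoup;
import org.jsoup.safety.Safelist;

class CommentCleaner {

    // Returns only the markup that the basicWithImages preset allows.
    // Assumption: jsoup is on the classpath and exposes Safelist
    // (older releases name this class Whitelist).
    static String clean(String untrustedHtml) {
        return Jsoup.clean(untrustedHtml, Safelist.basicWithImages());
    }

    public static void main(String[] args) {
        // Hypothetical user comment carrying a script-bearing attribute.
        String comment =
            "<a href=\"https://example.com/\" onclick=\"stealCookies()\">my site</a>";
        System.out.println(clean(comment));
    }
}
```

Running it on the sample comment should show the link without its onclick attribute and with rel="nofollow" added, which matches the behaviour described above.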

JAVA DOWNLOAD WEB PAGE HOW TO

We'll see a few examples of how to use jsoup, comparing how it interprets tag soup against Firefox. Then we'll see how to build a real app which can fetch data from the web on-demand.

JAVA DOWNLOAD WEB PAGE CODE

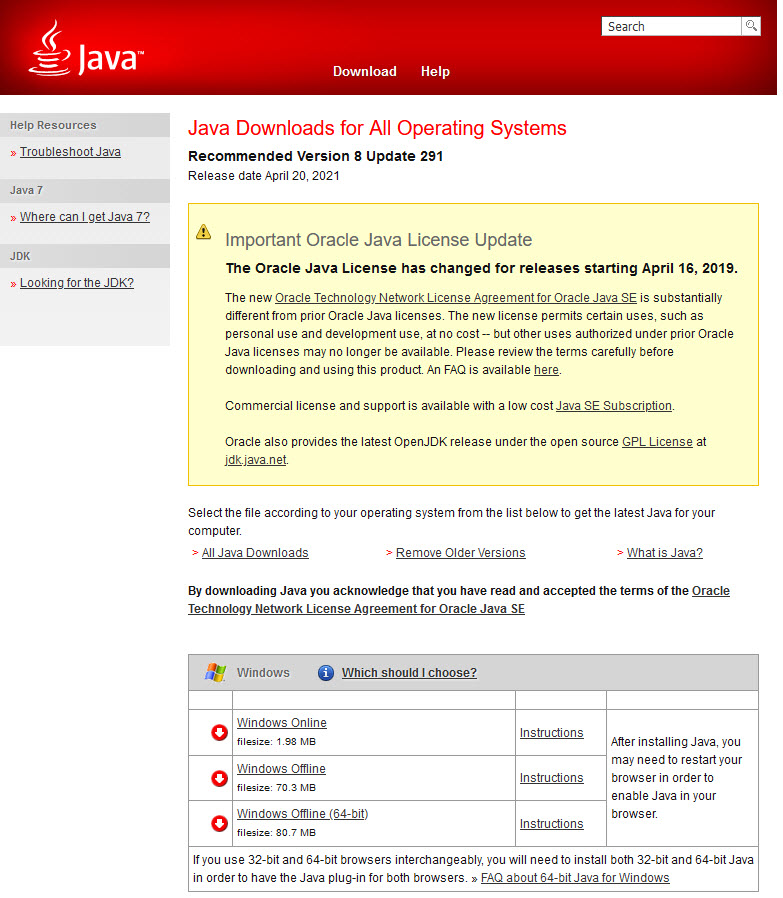

People open tags without closing them, they nest tags wrongly, and generally commit all kinds of XML faux pas. Some non-XML constructs are perfectly valid HTML and, admirably, browsers just cope with them. To adopt the flexible and stylish attitude of web browsers, you really need a dedicated HTML parser, and in this post I'll show how you can use jsoup to deal with the messy and wonderful web. You'll see how to parse valid (and invalid) HTML, clean up malicious HTML, and modify a document's structure too. At the end there is a small app which deals with real-world HTML.

The WHATWG, who design HTML, have consistently decided that compatibility with previous versions of HTML and with existing web pages is more important than making sure that all documents are valid XML. Good for them - this lowers the barrier for contribution on the web and makes it more resilient for all of us. Web browsers are therefore obliged to cope with mis-nested tags, where elements overlap instead of nesting cleanly, and with misplaced tags, which appear in a part of the document where they don't belong. With tags and bits of tags floating around all over the place, this kind of document became known as Tag Soup, hence the name "jsoup" for the Java library.

jsoup offers ways to fetch web pages and parse them from tag soup into a proper hierarchy. You can extract data by using CSS selectors, or by navigating and modifying the Document Object Model directly - just like a browser does, except you do it in Java code. You can also modify HTML and write it out safely. jsoup will not run JavaScript for you - if you need that in your app I'd recommend looking at JCEF.

jsoup is packaged as a single jar with no other dependencies, so you can add it to any Java project so long as you're using Java 7 or later. There are good instructions at /download and I have put all the code used in this post in a GitHub repo which uses Gradle to manage dependencies. To run the code from my repo you will need to have Java 11 or later.
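To make the parse-then-select workflow described above concrete, here is a minimal sketch. It assumes jsoup is on the classpath; the class name TagSoupDemo and the sample markup are invented for this illustration, and the markup deliberately leaves both paragraphs unclosed.

```java
import org.jsoup.Jsoup;
import org.jsoup.nodes.Document;
import org.jsoup.nodes.Element;

class TagSoupDemo {

    // Parses possibly malformed HTML into a Document tree.
    static Document parse(String html) {
        return Jsoup.parse(html);
    }

    public static void main(String[] args) {
        // Made-up tag soup: neither <p> is ever closed.
        String html =
            "<title>Tag soup</title><p>First paragraph<p>Second <b>bold</b> paragraph";
        Document doc = parse(html);

        // Read data back out with a CSS selector.
        System.out.println(doc.title());
        for (Element p : doc.select("p")) {
            System.out.println(p.text());
        }

        // Modify the tree, then write the body back out as HTML.
        doc.select("b").attr("class", "highlight");
        System.out.println(doc.body().html());
    }
}
```

Fetching a live page follows the same shape, with Jsoup.connect(url).get() in place of Jsoup.parse(html), but that variant needs network access.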

So, you need to parse HTML in your Java application. Perhaps you are extracting data from a website that doesn't have an API, or allowing users to put arbitrary HTML into your app and you need to check that they haven't tried to do anything nasty? Have you tried using regular expressions? It won't end well. The author of that now-infamous text managed to recover from their distress enough to suggest using an XML parser (before, presumably, collapsing into the void). The problem with this is that an awful lot of the HTML in the world is not valid XML.

Suppose you only want a program that reads the content of a web page. You can use the URL class to create a connection to the website. Create a new URL object and pass it the address of the page. Once the object is created, you can open a stream connection using the openStream() method of the URL object. Next, read the stream with a BufferedReader wrapped around new InputStreamReader(url.openStream(), StandardCharsets.UTF_8), which lets you read from the stream line by line in a while loop. To write the page to a file, create a BufferedWriter around new FileWriter("data.html", StandardCharsets.UTF_8), where data.html is the file in which the downloaded page will be stored. When all the content has been read from the stream and stored in the file, close the BufferedReader and the BufferedWriter at the end of your program.
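The download steps described above can be sketched roughly as follows. This is one possible reconstruction rather than the original listing: the class name PageDownloader, the download helper and the example.com placeholder address are assumptions, and try-with-resources is used here to close the reader and writer instead of explicit close() calls.

```java
import java.io.BufferedReader;
import java.io.BufferedWriter;
import java.io.FileWriter;
import java.io.IOException;
import java.io.InputStreamReader;
import java.net.URI;
import java.net.URL;
import java.nio.charset.StandardCharsets;

class PageDownloader {

    // Copies the text served at pageUrl into fileName, line by line,
    // and returns the number of lines copied.
    static int download(URL pageUrl, String fileName) throws IOException {
        int lines = 0;
        // try-with-resources closes both streams, even if an exception is thrown.
        try (BufferedReader reader = new BufferedReader(
                 new InputStreamReader(pageUrl.openStream(), StandardCharsets.UTF_8));
             BufferedWriter writer = new BufferedWriter(
                 new FileWriter(fileName, StandardCharsets.UTF_8))) {
            String line;
            while ((line = reader.readLine()) != null) {
                writer.write(line);
                writer.newLine();
                lines++;
            }
        }
        return lines;
    }

    public static void main(String[] args) throws Exception {
        // Placeholder address; pass a real one as the first argument.
        // URI.create(...).toURL() builds the URL object; new URL(String) also
        // works but is deprecated in recent JDKs.
        String address = args.length > 0 ? args[0] : "https://example.com/";
        URL url = URI.create(address).toURL();
        int lines = download(url, "data.html");
        System.out.println("Wrote " + lines + " lines to data.html");
    }
}
```

The FileWriter constructor that takes a Charset needs Java 11 or later. Note that this only copies the raw text of the page; it does not parse it in any way.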